All in One View

Content from Overview of Google Cloud for Machine Learning and AI

Last updated on 2026-03-04 | Edit this page

Overview

Questions

- Why would I run ML/AI experiments in the cloud instead of on my laptop or an HPC cluster?

- What does GCP offer for ML/AI, and how is it organized?

- What is the “notebook as controller” pattern?

Objectives

- Identify when cloud compute makes sense for ML/AI work.

- Describe what GCP and Vertex AI provide for ML/AI researchers.

- Explain the notebook-as-controller pattern used throughout this workshop.

Why run ML/AI in the cloud?

You have ML/AI code that works on your laptop. But at some point you need more — a bigger GPU (or multiple GPUs), a dataset that won’t fit on disk, or the ability to run dozens of training experiments overnight. You could invest in local hardware or compete for time on a shared HPC cluster, but cloud platforms let you rent exactly the hardware you need, for exactly as long as you need it, and then shut it down.

Cloud vs. university HPC clusters

Most universities offer shared HPC clusters with GPUs. These are excellent resources — but they have tradeoffs worth understanding:

| Factor | University HPC | Cloud (GCP) |

|---|---|---|

| Cost | Free or subsidized | Pay per hour |

| GPU availability | Shared queue; wait times during peak periods and per-job runtime limits (often 24–72 hrs) that may require checkpointing long training runs | On-demand (subject to quota); jobs run as long as needed |

| Hardware variety | Fixed hardware refresh cycle (3–5 years) | Latest GPUs available immediately (A100, H100, L4) |

| Scaling | Limited by cluster size | Spin up hundreds of jobs in parallel |

| Multi-GPU / NVLink | Sometimes available, depends on cluster | Available on demand (e.g., A2/A3 instances with NVLink-connected multi-GPU nodes) — essential for training, fine-tuning, or serving large LLMs that don’t fit in a single GPU’s memory |

| Job orchestration | Writing scheduler scripts, packaging environments, and wiring up parallel job arrays can take days of refactoring | A few SDK calls: define a job, set hardware, call

.run() — parallelism (e.g., tuning trials) is built in |

| Software environment | Module system; some clusters support Apptainer/Singularity containers — research computing staff can often help with setup | Vertex AI provides prebuilt

containers for common ML frameworks (PyTorch, XGBoost, TensorFlow);

add extra packages via a requirements list, or bring your

own Docker image for full control |

| Power & cooling | Paid for by the university; campus data centers often spend nearly as much energy on cooling as on the computers themselves | Google’s data centers are roughly twice as energy-efficient as a typical campus facility — and power, cooling, and hardware failures are their problem, not yours |

The short version: use your university cluster when it has the hardware you need and the queue isn’t blocking you. Use the cloud when you need hardware your cluster doesn’t have, need to scale beyond what the queue allows, or need a specific software environment you can’t easily get on-campus.

Many researchers use both — develop and test on HPC, then scale to cloud for large experiments or specialized hardware. This workshop teaches the cloud side of that workflow.

When does model size justify cloud compute?

Not every model needs cloud hardware. Here’s a rough guide:

| Model scale | Parameters | Example models | Where to run |

|---|---|---|---|

| Small | < 10M | Logistic regression, small CNNs, XGBoost | Laptop or HPC — cloud adds overhead without much benefit |

| Medium | 10M–500M | ResNets, BERT-base, mid-sized transformers | HPC with a single GPU (RTX 2080 Ti, L40) or cloud (T4, L4) |

| Large | 500M–10B | GPT-2, LLaMA-7B, fine-tuning large transformers | HPC with A100 (40/80 GB) or cloud — both work well |

| Very large | 10B–70B | LLaMA-70B, Mixtral | HPC with H100/H200 (80–141 GB) or cloud multi-GPU nodes |

| Frontier | 70B+ | GPT-4-scale, multi-expert models | Cloud — requires multi-node clusters beyond what most HPC queues offer |

CHTC’s GPU Lab covers more than you might think. The GPU Lab includes A100s (40 and 80 GB), H100s (80 GB), and H200s (141 GB) — enough VRAM to run inference or fine-tune models up to ~70B parameters on a single GPU with quantization. For many UW researchers, this hardware handles “large model” workloads without needing cloud. Jobs have time limits (12 hrs for short, 24 hrs for medium, 7 days for long jobs), so plan your training runs accordingly.

Cloud becomes the clear choice when you need interconnected multi-GPU nodes (NVLink) for large distributed training, hardware beyond what the GPU Lab queue offers, or when queue wait times are blocking a deadline.

A note on cloud costs

Cloud computing is not free, but it’s worth putting costs in context:

-

Hardware is expensive and ages fast. A single A100

GPU costs ~

$15,000and is outdated within a few years. Cloud lets you rent the latest hardware by the hour. - You pay only for what you use. Stop a VM and the meter stops — valuable for bursty research workloads.

- Managed services save development time. You don’t have to build DAGs, write scheduling logic, package custom containers, or maintain orchestration infrastructure — GCP handles that plumbing so you can focus on the ML.

- Budgets and alerts keep you safe. GCP billing dashboards and budget alerts help prevent surprise bills. We cover cleanup in Episode 9.

The key habit: choose the right machine size, stop resources when idle, and monitor spending. We’ll reinforce this throughout.

For UW-Madison researchers

UW-Madison offers reduced-overhead cloud billing, NIH STRIDES

discounts, Google Cloud research credits (up to $5,000),

free on-campus GPUs via CHTC,

and dedicated support from the Public Cloud Team. See the

UW-Madison Cloud Resources

page for details.

Google Cloud Platform (GCP) is one of several clouds that supports this. The rest of this episode explains what GCP offers for ML/AI and how the pieces fit together.

What GCP provides for ML/AI

GCP gives you three things that matter for applied ML/AI research:

Flexible compute. You pick the hardware that fits your workload:

- CPUs for lightweight models, preprocessing, or feature engineering.

- GPUs (NVIDIA T4, L4, V100, A100, H100) for training deep learning models. For help choosing, see Compute for ML.

- TPUs (Tensor Processing Units) — Google’s custom hardware for matrix-heavy workloads. TPUs work best with TensorFlow and JAX; PyTorch support is improving but still less mature.

Scalable storage. Google Cloud Storage (GCS) buckets give you a place to store datasets, scripts, and model artifacts that any job or notebook can access. Think of it as a shared filesystem for your project.

Managed ML/AI services. Vertex AI is Google’s ML/AI platform. It wraps compute, storage, and tooling into a set of services designed for ML/AI workflows — managed notebooks, training jobs, hyperparameter tuning, model hosting, and access to foundation models like Gemini.

How the pieces fit together: Vertex AI

Google Cloud has many products and brand names. Here are the ones you’ll use in this workshop and how they relate:

| Term | What it is |

|---|---|

| GCP | Google Cloud Platform — the overall cloud: compute, storage, networking. |

| Vertex AI | Google’s ML platform — notebooks, training jobs, tuning, model hosting. Everything below lives under this umbrella. |

| Workbench | Managed Jupyter notebooks that run on a Compute Engine VM. Your interactive environment. |

| Training & tuning jobs | How you run code on Vertex AI hardware. You submit a script and a

machine spec; Vertex AI provisions the VM, runs it, and shuts it down.

The SDK offers several flavors — CustomTrainingJob (Ep

4–5), HyperparameterTuningJob (Ep 6) — and the CLI

equivalent is gcloud ai custom-jobs (Ep 8). |

| Cloud Storage (GCS) | Object storage for files. Similar to AWS S3. |

| Compute Engine | Virtual machines you configure with CPUs, GPUs, or TPUs. Workbench and training jobs run on Compute Engine under the hood. |

| Gemini | Google’s family of large language models, accessed through the Vertex AI API. |

For a full list of terms, see the Glossary.

The notebook-as-controller pattern

The central idea of this workshop is simple: you work in a lightweight Vertex AI Workbench notebook — a small, cheap VM — and use the Vertex AI Python SDK to dispatch work to managed services. The notebook itself does not run heavy compute. Instead, it orchestrates:

- Training jobs (Eps 4–5) — run your script on auto-provisioned GPU hardware, then shut down when complete.

- Hyperparameter tuning jobs (Ep 6) — search a parameter space across parallel trials and return the best configuration.

- Cloud Storage (Ep 3) — shared persistent storage for datasets, model artifacts, logs, and results.

- Gemini API (Ep 7) — embeddings and generation for Retrieval-Augmented Generation (RAG) pipelines.

All of these are accessed via SDK calls from the notebook. This keeps costs low (the notebook VM stays small) and keeps your work reproducible (each job is a clean, logged run on dedicated hardware).

Console, notebooks, or CLI — your choice

This workshop uses the GCP web console and

Workbench notebooks for most tasks because they’re

visual and easy to follow for beginners. But nearly everything we do can

also be done from the gcloud command-line

tool — submitting training jobs, managing buckets, checking

quotas. Episode 8 covers the CLI

equivalents. If you prefer terminal-based workflows or need to automate

jobs in scripts and CI/CD pipelines, that episode shows you how.

One important caveat: whether you use the console, notebooks, or CLI, resources you create (VMs, training jobs, endpoints) keep running and billing until you explicitly stop them. There’s no automatic shutdown. We cover cleanup habits in Episode 9, but the short version is: always check for running resources before you walk away.

Your current setup

Think about how you currently run ML experiments:

- What hardware do you use — laptop, HPC cluster, cloud?

- What’s the biggest infrastructure pain point in your workflow (GPU access, environment setup, data transfer, cost)?

- What would you most like to offload to a managed service?

Take 3–5 minutes to discuss with a partner or share in the workshop chat.

- Cloud platforms let you rent hardware on demand instead of buying or waiting for shared resources.

- GCP organizes its ML/AI services under Vertex AI — notebooks, training jobs, tuning, and model hosting.

- The notebook-as-controller pattern keeps your notebook cheap while offloading heavy training to dedicated Vertex AI jobs.

- Everything in this workshop can also be done from the

gcloudCLI (Episode 8).

Content from Notebooks as Controllers

Last updated on 2026-03-05 | Edit this page

Overview

Questions

- How do you set up and use Vertex AI Workbench notebooks for machine

learning tasks?

- How can you manage compute resources efficiently using a “controller” notebook approach in GCP?

Objectives

- Describe how Vertex AI Workbench notebooks fit into ML/AI workflows on GCP.

- Set up a Jupyter-based Workbench Instance as a lightweight controller to manage compute tasks.

- Configure a Workbench Instance with appropriate machine type, labels, and idle shutdown for cost-efficient orchestration.

Setting up our notebook environment

Google Cloud Workbench provides JupyterLab-based environments that can be used to orchestrate ML/AI workflows. In this workshop, we will use a Workbench Instance—the recommended option going forward, as other Workbench environments are being deprecated.

Workbench Instances come with JupyterLab 3 pre-installed and are configured with GPU-enabled ML frameworks (TensorFlow, PyTorch, etc.), making it easy to start experimenting without additional setup. Learn more in the Workbench Instances documentation.

Using the notebook as a controller

The notebook instance functions as a controller to manage

more resource-intensive tasks. By selecting a modest machine type (e.g.,

n2-standard-2), you can perform lightweight operations

locally in the notebook while using the Vertex AI Python

SDK to launch compute-heavy jobs on larger machines (e.g.,

GPU-accelerated) when needed.

This approach minimizes costs while giving you access to scalable infrastructure for demanding tasks like model training, batch prediction, and hyperparameter tuning.

One practical advantage of Workbench notebooks:

authentication is automatic. A Workbench VM inherits

the permissions of its attached service account, so calls to Cloud

Storage, Vertex AI, and the Gemini API work with no extra credential

setup — no API keys or login commands needed. If you later run the same

code from your laptop or an HPC cluster, you’ll need to set up

credentials separately (see the GCP authentication

docs). (Prefer working from a terminal? Episode 8: CLI Workflows covers how to

do everything in this workshop using gcloud commands

instead of notebooks.)

We will follow these steps to create our first Workbench Instance:

1. Navigate to Workbench

- Open the Google Cloud Console (console.cloud.google.com) — this is the web dashboard where you manage all GCP resources. Search for “Workbench.”

- Click the “Instances” tab (this is the supported path going forward).

2. Create a new Workbench Instance

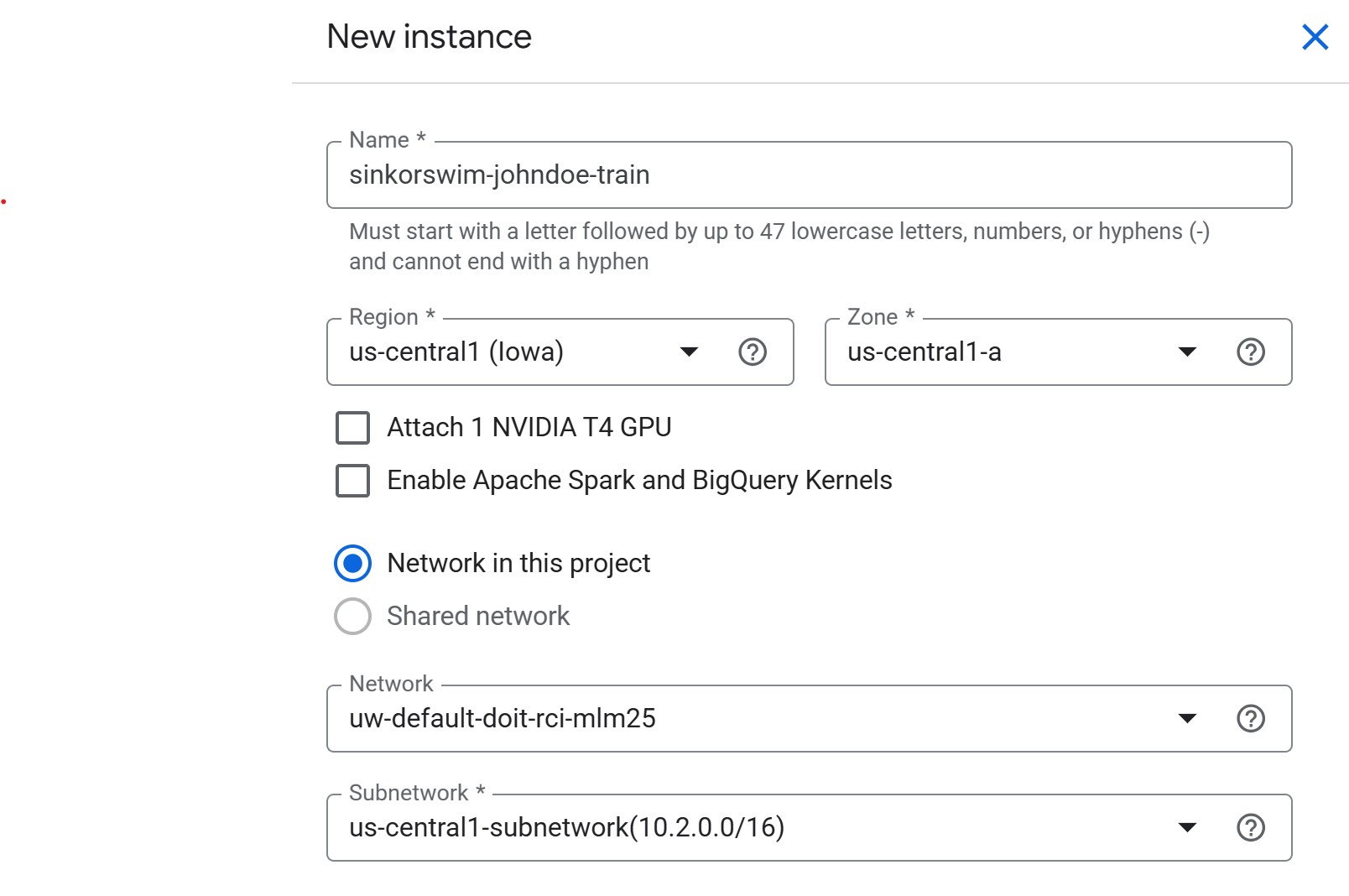

Initial settings

- Click Create New near the top of the Workbench page

-

Name: Use the convention

lastname-purpose(e.g.,doe-workshop). GCP resource names only allow lowercase letters, numbers, and hyphens. We’ll use a single instance for training, tuning, RAG, and more, soworkshopis a good general-purpose label. -

Region: Select

us-central1. When we create a storage bucket in Episode 3, we’ll use the same region — keeping compute and storage co-located avoids cross-region transfer charges and keeps data access fast. -

Zone:

us-central1-a(or another zone inus-central1, like-bor-c)- If capacity or GPU availability is limited in one zone, switch to another zone in the same region.

-

NVIDIA T4 GPU: Leave unchecked for now

- We will request GPUs for training jobs separately. Attaching here increases idle costs.

-

Apache Spark and BigQuery Kernels: Leave unchecked

- BigQuery kernels let you run SQL analytics directly in a notebook, but we won’t need them in this workshop. Leave unchecked to avoid pulling extra container images.

- Network in this project: If you’re working in a shared workshop environment, select the network provided by your administrator (shared environments typically do not allow using external or default networks). If using a personal GCP project, the default network is fine.

-

Network / Subnetwork: Leave as pre-filled.

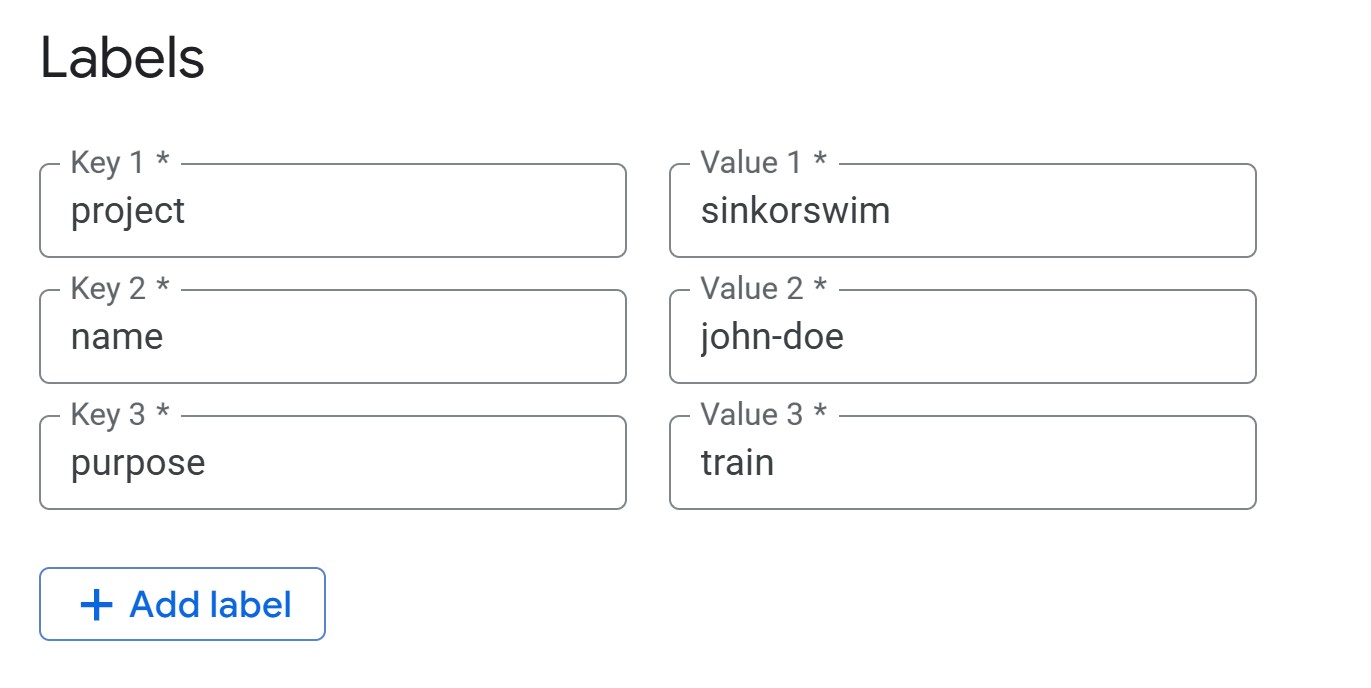

Advanced settings: Details (tagging)

-

IMPORTANT: Open the “Advanced options” menu next.

-

Labels (required for cost tracking): Under the

Details menu, add the following tags (all lowercase) so that you can

track the total cost of your activity on GCP later:

name = firstname-lastnamepurpose = workshop

-

Labels (required for cost tracking): Under the

Details menu, add the following tags (all lowercase) so that you can

track the total cost of your activity on GCP later:

Advanced Settings: Environment

Leave environment settings at their defaults for this workshop.

Workbench uses JupyterLab 3 by default with NVIDIA GPU drivers, CUDA,

and common ML frameworks preinstalled. For future reference, you can

optionally select JupyterLab 4, provide a custom Docker image, or

specify a post-startup script (gs://path/to/script.sh) to

auto-configure the instance at boot.

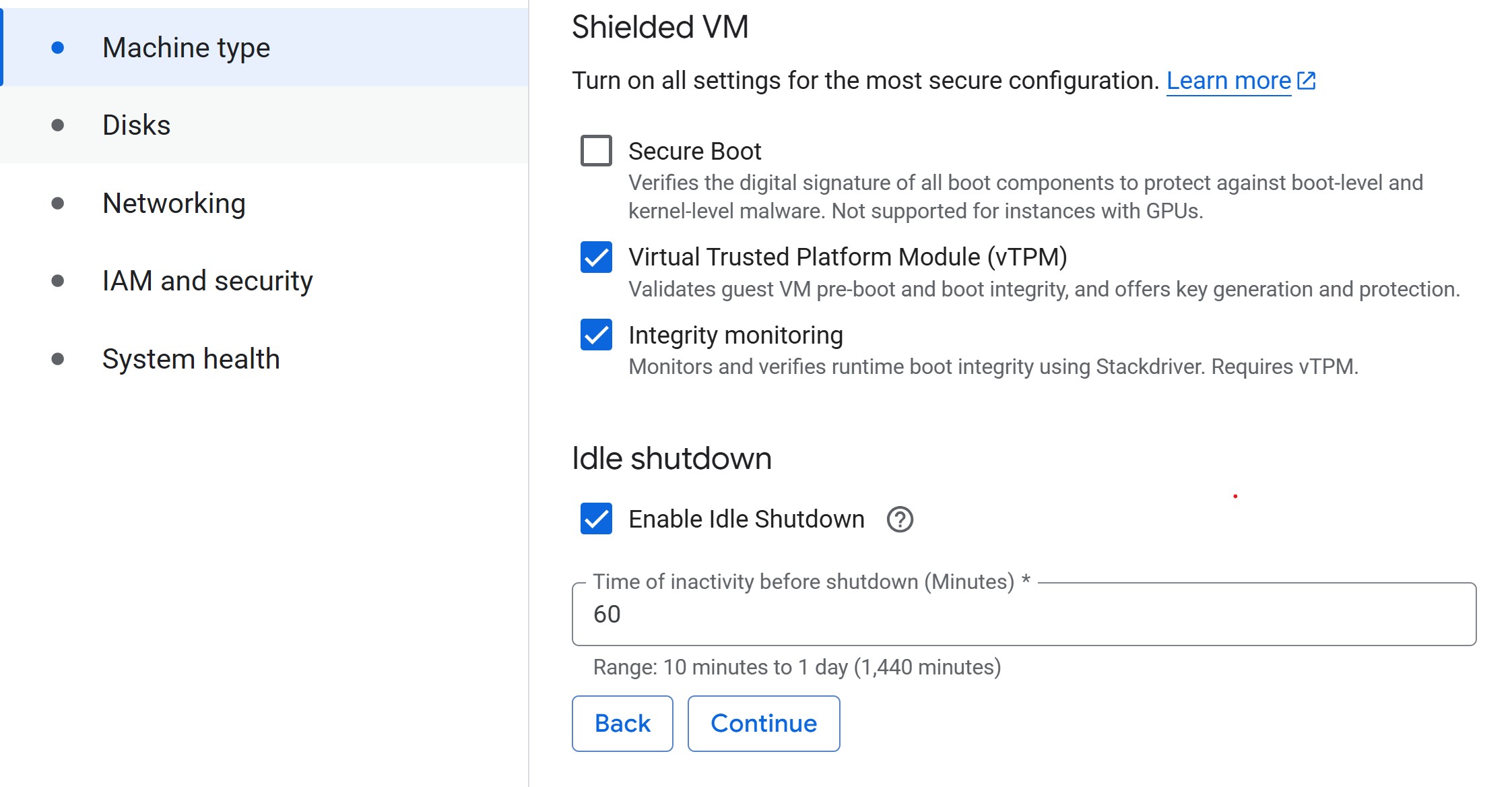

Advanced settings: Machine Type

-

Machine type: Select a small machine (e.g.,

n2-standard-2, ~$0.07/hr) to act as the controller.- This keeps costs low while you delegate heavy lifting to training jobs.

- For guidance on common machine types and their costs, see Compute for ML. For help deciding when you need cloud hardware at all, see When does model size justify cloud compute? in Episode 1.

- Set idle shutdown: To save on costs when you aren’t doing anything in your notebook, lower the default idle shutdown time to 60 (minutes).

Advanced Settings: Disks

Leave disk settings at their defaults for this workshop. Each Workbench Instance has two disks: a boot disk (100 GB — holds the OS and libraries) and a data disk (150 GB default — holds your datasets and outputs). Both use Balanced Persistent Disks. Keep “Delete to trash” unchecked so deleted files free space immediately.

Rule of thumb: allocate ≈ 2× your dataset size for

the data disk, and keep bulk data in Cloud Storage (gs://)

rather than on local disk — PDs cost ~ $0.10/GB/month vs. ~

$0.02/GB/month for Cloud Storage.

Disk sizing and cost details

- Boot disk: Rarely needs resizing. Increase to 150–200 GB only for large custom environments or multiple frameworks.

- Data disk: Use SSD PD only for high-I/O workloads. Disks can be resized anytime without downtime, so start small and expand when needed.

-

Cost comparison: A 200 GB dataset costs ~

$24/month on a PD but only ~$5/month in Cloud Storage. - Pricing: Persistent Disk pricing · Cloud Storage pricing

Create notebook

- Click Create to create the instance. Provisioning typically takes 3–5 minutes. You’ll see the status change from “Provisioning” to “Active” with a green checkmark. While waiting, work through the challenges below.

Challenge 1: Notebook Roles

Your university provides different compute options: laptops, on-prem HPC, and GCP.

- What role does a Workbench Instance notebook play compared to an HPC login node or a laptop-based JupyterLab?

- Which tasks should stay in the notebook (lightweight control, visualization) versus being launched to larger cloud resources?

The notebook serves as a lightweight control plane.

- Like an HPC login node, it is not meant for heavy computation.

- Suitable for small preprocessing, visualization, and orchestrating jobs.

- Resource-intensive tasks (training, tuning, batch jobs) should be submitted to scalable cloud resources (GPU/large VM instances) via the Vertex AI SDK.

Challenge 2: Controller Cost Estimate

Your controller notebook uses an n2-standard-2 instance

(~ $0.07/hr — see Compute for

ML for other common machine types and costs).

- Estimate the monthly cost if you use it 8 hours/day, 5 days/week, with idle shutdown enabled.

- Compare that to leaving it running 24/7 for the same month.

-

With idle shutdown: 8 hrs × 5 days × 4 weeks = 160

hrs → 160 ×

$0.07≈$11.20/month -

Running 24/7: 24 hrs × 30 days = 720 hrs → 720 ×

$0.07≈$50.40/month - Idle shutdown saves you ~

$39/month on a single small controller instance. The savings are even larger for bigger machine types.

Managing your instance

You don’t have to wait for idle shutdown — you can manually stop your instance anytime from the Workbench Instances list by selecting the checkbox and clicking Stop. To resume work, click Start. You only pay for compute while the instance is running (disk charges continue while stopped).

To permanently remove an instance, select it and click Delete. Full cleanup is covered in Episode 9.

Managing training and tuning with the controller notebook

In the following episodes, we will use the Vertex AI Python

SDK (google-cloud-aiplatform) from this notebook

to submit compute-heavy tasks on more powerful machines. Examples

include:

- Training a model on a GPU-backed instance.

- Running hyperparameter tuning jobs managed by Vertex AI.

Here’s how the notebook, jobs, and storage connect:

This pattern keeps costs low by running your notebook on a modest VM while only incurring charges for larger resources when they are actively in use.

You don’t need a notebook to use Vertex AI

We start with Vertex AI Workbench notebooks because they give you authenticated access to buckets, training jobs, and other GCP services out of the box — no credential setup required. The Console UI also lets you see and manage running jobs directly, which matters when you’re learning: accidentally submitting a duplicate training job is easy to spot and cancel in the Console, harder to notice from a terminal.

Episode 8 introduces the

gcloud CLI once these concepts are

familiar. Notebooks are not required for any of the

workflows covered here — everything we do through the Python SDK can

also be done from:

- A plain Python script run from your terminal or an HPC scheduler.

- The

gcloudCLI (e.g.,gcloud ai custom-jobs create ...). - A CI/CD pipeline (GitHub Actions, Cloud Build, etc.).

The real work happens in the training scripts and SDK calls — the notebook is just a convenient starting point.

Troubleshooting

-

VM stuck in “Provisioning” for more than 5 minutes?

Try deleting the instance and re-creating it in a different zone within

the same region (e.g.,

us-central1-binstead ofus-central1-a). - Instance stopped unexpectedly? Check the idle shutdown setting — it may have timed out. Restart from the Instances list by clicking Start.

- Can’t see the project or get permission errors? Ensure you’re signed into the correct Google account and that IAM permissions have propagated (this can take a few minutes after initial setup).

Load pre-filled Jupyter notebooks

Once your instance shows as “Active” (green checkmark), click Open JupyterLab. From the Launcher, select Python 3 (ipykernel) under Notebook to create a new notebook — we don’t need the TensorFlow or PyTorch kernels yet, as those are used in later episodes for training jobs.

Run the following command to clone the lesson repository. This contains pre-filled notebooks for each episode and the training scripts we’ll use later, so you won’t need to write boilerplate code from scratch.

Then, navigate to

/Intro_GCP_for_ML/notebooks/03-Data-storage-and-access.ipynb

to begin the next episode.

- Use a small Workbench Instance as a controller — delegate heavy training to Vertex AI jobs.

- Workbench VMs inherit service account permissions automatically, simplifying authentication.

- Choose the same region for your Workbench Instance and storage bucket to avoid extra transfer costs.

- Apply labels to all resources for cost tracking, and enable idle auto-stop to avoid surprise charges.

Content from Data Storage and Access

Last updated on 2026-03-05 | Edit this page

Overview

Questions

- How can I store and manage data effectively in GCP for Vertex AI workflows?

- What are the advantages of Google Cloud Storage (GCS) compared to local or VM storage for machine learning projects?

- How can I load data from GCS into a Vertex AI Workbench notebook?

Objectives

- Explain data storage options in GCP for machine learning projects.

- Set up a GCS bucket and upload data.

- Read data directly from a GCS bucket into memory in a Vertex AI notebook.

- Monitor storage usage and estimate costs.

- Upload new files from the Vertex AI environment back to the GCS bucket.

ML/AI projects rely on data, making efficient storage and management essential. Google Cloud offers several storage options, but the most common for ML/AI workflows are Virtual Machine (VM) disks and Google Cloud Storage (GCS) buckets.

Consult your institution’s IT before handling sensitive data in GCP

As with AWS, do not upload restricted or sensitive data to GCP services unless explicitly approved by your institution’s IT or cloud security team. For regulated datasets (HIPAA, FERPA, proprietary), work with your institution to ensure encryption, restricted access, and compliance with policies.

Options for storage: VM Disks or GCS

What is a VM disk?

A VM disk is the storage volume attached to a Compute Engine VM or a Vertex AI Workbench notebook. It can store datasets and intermediate results, but it is tied to the lifecycle of the VM.

When to store data directly on a VM disk

- Useful for small, temporary datasets processed interactively.

- Data persists if the VM is stopped, but storage costs continue as long as the disk exists.

- Not ideal for collaboration, scaling, or long-term dataset storage.

Limitations of VM disk storage

- Scalability: Limited by disk size quota.

- Sharing: Harder to share across projects or team members.

- Cost: More expensive per GB compared to GCS for long-term storage.

What is a GCS bucket?

For most ML/AI workflows in GCP, Google Cloud Storage (GCS)

buckets are recommended. A GCS bucket is a container in

Google’s object storage service where you can store an essentially

unlimited number of files. Data in GCS can be accessed from Vertex AI

training jobs, Workbench notebooks, and other GCP services using a

GCS URI (e.g.,

gs://your-bucket-name/your-file.csv). Think of GCS URIs as

cloud file paths — you’ll use them throughout the workshop to reference

data in training scripts, notebooks, and SDK calls.

Benefits of using GCS (recommended for ML/AI workflows)

- Separation of storage and compute: Data remains available even if VMs or notebooks are deleted.

- Easy sharing: Buckets can be accessed by collaborators with the right IAM roles.

- Integration with Vertex AI and BigQuery: Read and write data directly using other GCP tools.

- Scalability: Handles datasets of any size without disk limits.

- Cost efficiency: Lower cost than persistent disks (VM storage) for long-term storage.

- Data persistence: Durable and highly available across regions.

- Filesystem mounting: GCS buckets can be mounted as local directories using Cloud Storage FUSE, making them accessible like regular filesystems for tools that expect local file paths.

Creating a GCS bucket

1. Sign in to Google Cloud Console

- Go to console.cloud.google.com and log in with your credentials.

- Select your project from the project dropdown at the top of the page. If you’re using the shared workshop project, the instructor will provide the project name.

3. Create a new bucket

Click Create bucket and configure the following settings:

Bucket name: Enter a globally unique name using the convention

lastname-dataname(e.g.,doe-titanic).-

Labels: Add cost-tracking labels (same keys you used for the Workbench Instance in Episode 2, plus a

datasettag):name = firstname-lastnamepurpose = workshopdataset = titanic

In shared accounts, labels are mandatory.

Location: Choose Region →

us-central1(same region as your compute to avoid egress charges).Storage class: Standard (best for active ML/AI workflows).

Access control: Uniform (simpler IAM-based permissions).

Protection: Leave default soft delete enabled; skip versioning and retention policies.

Click Create if everything looks good.

4. Upload files to the bucket

- If you haven’t yet, download the data for this workshop (Right-click

→ Save as): data.zip

- Extract the zip folder contents (Right-click → Extract all on Windows; double-click on macOS).

- The zip contains the Titanic dataset — passenger information (age, class, fare, etc.) with a survival label. This is a classic binary classification task we’ll use for training in later episodes.

- In the bucket dashboard, click Upload Files.

- Select your Titanic CSVs (

titanic_train.csvandtitanic_test.csv) and upload.

Note the GCS URI for your data After uploading,

click on a file and find its gs:// URI (e.g.,

gs://doe-titanic/titanic_test.csv). This URI will be used

to access the data in your notebook.

Adjust bucket permissions

Your bucket exists, but your notebooks and training jobs don’t automatically have permission to use it. GCP follows the principle of least privilege — services only get the access you explicitly grant. In this section we’ll find the service account that Vertex AI uses and give it the right roles on your bucket.

Check your project ID

First, confirm which project your notebook is connected to. Run this cell in your Workbench notebook:

Copy the output — you’ll paste it into Cloud Shell commands below.

These commands run in Cloud Shell, not in a notebook

Open Cloud Shell — a browser-based terminal built into the Google Cloud Console (click the >_ icon in the top-right toolbar). Copy the commands below and paste them into that terminal.

Set your project

If Cloud Shell doesn’t already know your project, set it first:

Replace YOUR_PROJECT_ID with the project ID you copied

above. For the shared MLM25 workshop the project ID is

doit-rci-mlm25-4626.

Find your service account

When you create a GCP project, Google automatically provisions a Compute Engine default service account. This is the identity that Vertex AI Workbench notebooks and training jobs use when they call other GCP services (like Cloud Storage). By default this account may not have access to your bucket, so we need to grant it the right IAM roles explicitly.

First, look up the service account email:

SH

gcloud iam service-accounts list --filter="displayName:Compute Engine default service account" --format="value(email)"This will return an email like

123456789-compute@developer.gserviceaccount.com. Copy it —

you’ll paste it into the commands below.

Grant permissions

Now we give that service account the ability to read from and write to your bucket. Without these roles, your notebooks would get “Access Denied” errors when trying to load training data or save model artifacts.

Replace YOUR_BUCKET_NAME and

YOUR_SERVICE_ACCOUNT, then run:

SH

# objectViewer — lets notebooks READ data (e.g., load CSVs for training)

gcloud storage buckets add-iam-policy-binding gs://YOUR_BUCKET_NAME \

--member="serviceAccount:YOUR_SERVICE_ACCOUNT" \

--role="roles/storage.objectViewer"

# objectCreator — lets training jobs WRITE outputs (e.g., saved models, logs)

gcloud storage buckets add-iam-policy-binding gs://YOUR_BUCKET_NAME \

--member="serviceAccount:YOUR_SERVICE_ACCOUNT" \

--role="roles/storage.objectCreator"

# objectAdmin — adds OVERWRITE and DELETE (only needed if you want to

# re-run jobs that replace existing files or clean up old artifacts)

gcloud storage buckets add-iam-policy-binding gs://YOUR_BUCKET_NAME \

--member="serviceAccount:YOUR_SERVICE_ACCOUNT" \

--role="roles/storage.objectAdmin"

gcloud storage

vs. gsutil

Older tutorials often reference gsutil for Cloud Storage

operations. Google now recommends gcloud storage as the

primary CLI. Both work, but gcloud storage is actively

maintained and consistent with the rest of the gcloud

CLI.

Data transfer & storage costs

GCS costs are based on three things: storage class (how you store data), data transfer (moving data in or out of GCP), and operations (API requests). Operations are the individual actions your code performs against Cloud Storage — every time a notebook reads a file or a training job writes a model, that’s an API request.

-

Standard storage: ~

$0.02per GB per month inus-central1. - Uploading data (ingress): Free.

-

Downloading data out of GCP (egress): ~

$0.12per GB. -

Cross-region access: ~

$0.01–$0.02per GB within North America. -

GET requests (reading/downloading objects): ~

$0.004per 10,000 requests. -

PUT/POST requests (creating/uploading objects): ~

$0.05per 10,000 requests. - Deleting data: Free (but Nearline/Coldline/Archive early-deletion fees apply).

For detailed pricing, see GCS Pricing Information.

Challenge 1: Estimating Storage Costs

1. Estimate the total cost of storing 1 GB in GCS Standard storage (us-central1) for one month assuming: - Dataset read from the bucket 100 times within GCP (e.g., each training or tuning run fetches the data via a GET request — this stays inside Google’s network, so no egress charge) - Data is downloaded once out of GCP to your laptop at the end of the project (this does incur an egress charge)

2. Repeat the above calculation for datasets of 10 GB, 100 GB, and 1 TB (1024 GB).

Hints: Storage $0.02/GB/month, Egress

$0.12/GB, GET requests negligible at this scale.

-

1 GB: Storage

$0.02+ Egress$0.12=$0.14 -

10 GB:

$0.20+$1.20=$1.40 -

100 GB:

$2.00+$12.00=$14.00 -

1 TB:

$20.48+$122.88=$143.36

Accessing data from your notebook

Now that our bucket is set up, let’s use it from the Workbench notebook you created in the previous episode.

If you haven’t already cloned the repository, open JupyterLab from

your Workbench Instance and run

!git clone https://github.com/qualiaMachine/Intro_GCP_for_ML.git.

Then navigate to

/Intro_GCP_for_ML/notebooks/03-Data-storage-and-access.ipynb.

Set up GCP environment

If you haven’t already, initialize the storage client (same code from

the permissions section earlier). The storage.Client() call

creates a connection using the credentials already attached to your

Workbench VM.

Reading data directly into memory

The code below downloads a CSV from your bucket and loads it into a

pandas DataFrame. The blob.download_as_bytes() call pulls

the file contents as raw bytes, and io.BytesIO wraps those

bytes in a file-like object that pd.read_csv can read — no

temporary file on disk needed.

PYTHON

import pandas as pd

import io

bucket_name = "doe-titanic" # ADJUST to your bucket's name

bucket = client.bucket(bucket_name)

blob = bucket.blob("titanic_train.csv")

train_data = pd.read_csv(io.BytesIO(blob.download_as_bytes()))

print(train_data.shape)

train_data.head()The Titanic dataset contains passenger information (age, class, fare, etc.) and a binary survival label — we’ll train a classifier on this data in Episode 4.

Alternative: reading directly with pandas

Vertex AI Workbench comes with gcsfs pre-installed,

which lets pandas read GCS URIs directly — no BytesIO

conversion needed:

This is convenient for quick exploration. We use the

storage.Client approach above because it gives you more

control (listing blobs, checking sizes, uploading), which you’ll need in

the sections that follow.

Common errors

-

Forbidden (403)— Your service account lacks permission. Revisit the Adjust bucket permissions section above. -

NotFound (404)— The bucket name or file path is wrong. Double-checkbucket_nameand the blob path withclient.list_blobs(bucket_name). -

DefaultCredentialsError— The notebook cannot find credentials. Make sure you are running on a Vertex AI Workbench Instance (not a local machine).

Monitoring storage usage and costs

It’s good practice to periodically check how much storage your bucket is using. The code below sums up all object sizes.

PYTHON

total_size_bytes = 0

bucket = client.bucket(bucket_name)

for blob in client.list_blobs(bucket_name):

total_size_bytes += blob.size

total_size_mb = total_size_bytes / (1024**2)

print(f"Total size of bucket '{bucket_name}': {total_size_mb:.2f} MB")PYTHON

storage_price_per_gb = 0.02 # $/GB/month for Standard storage

egress_price_per_gb = 0.12 # $/GB for internet egress (same-region transfers are free)

total_size_gb = total_size_bytes / (1024**3)

monthly_storage = total_size_gb * storage_price_per_gb

egress_cost = total_size_gb * egress_price_per_gb

print(f"Bucket size: {total_size_gb:.4f} GB")

print(f"Estimated monthly storage cost: ${monthly_storage:.4f}")

print(f"Estimated annual storage cost: ${monthly_storage*12:.4f}")

print(f"One-time full download (egress) cost: ${egress_cost:.4f}")Writing output files to GCS

PYTHON

# Create a sample file locally on the notebook VM

file_path = "/home/jupyter/Notes.txt"

with open(file_path, "w") as f:

f.write("This is a test note for GCS.")PYTHON

bucket = client.bucket(bucket_name)

blob = bucket.blob("docs/Notes.txt")

blob.upload_from_filename(file_path)

print("File uploaded successfully.")List bucket contents:

Challenge 2: Read and explore the test dataset

Read titanic_test.csv from your GCS bucket and display

its shape. How does the test set compare to the training set in size and

columns?

PYTHON

blob = client.bucket(bucket_name).blob("titanic_test.csv")

test_data = pd.read_csv(io.BytesIO(blob.download_as_bytes()))

print("Test shape:", test_data.shape)

print("Train shape:", train_data.shape)

print("Same columns?", list(test_data.columns) == list(train_data.columns))

test_data.head()Both datasets share the same 12 columns (including

Survived). The test set is a smaller held-out subset (179

rows vs 712 in training) — roughly an 80/20 split used for final

evaluation after the model is trained.

Challenge 3: Upload a summary CSV to GCS

Using train_data, compute the survival rate by passenger

class (Pclass) and upload the result as

results/survival_by_class.csv to your bucket.

PYTHON

summary = train_data.groupby("Pclass")["Survived"].mean().reset_index()

summary.columns = ["Pclass", "SurvivalRate"]

print(summary)

# Save locally then upload

summary.to_csv("/home/jupyter/survival_by_class.csv", index=False)

blob = client.bucket(bucket_name).blob("results/survival_by_class.csv")

blob.upload_from_filename("/home/jupyter/survival_by_class.csv")

print("Summary uploaded to GCS.")Removing unused data (complete after the workshop)

After you are done using your data, remove unused files/buckets to stop costs.

You can delete files programmatically. Let’s clean up the notes file we uploaded earlier:

PYTHON

blob = client.bucket(bucket_name).blob("docs/Notes.txt")

blob.delete()

print("docs/Notes.txt deleted.")Verify it’s gone:

For larger clean-up tasks, use the Cloud Console:

- Delete files only – In your bucket, select the files you want to remove and click Delete.

- Delete the bucket entirely – In Cloud Storage > Buckets, select your bucket and click Delete.

For a detailed walkthrough of cleaning up all workshop resources, see Episode 9: Resource Management and Cleanup.

- Use GCS for scalable, cost-effective, and persistent storage in GCP.

- Persistent disks are suitable only for small, temporary datasets.

- Load data from GCS into memory with

storage.Clientor directly viapd.read_csv("gs://..."). - Periodically check storage usage and estimate costs to manage your GCS budget.

- Track your storage, transfer, and request costs to manage expenses.

- Regularly delete unused data or buckets to avoid ongoing costs.

Content from Training Models in Vertex AI: Intro

Last updated on 2026-03-05 | Edit this page

Overview

Questions

- What are the differences between training locally in a Vertex AI

notebook and using Vertex AI-managed training jobs?

- How do custom training jobs in Vertex AI streamline the training

process for various frameworks?

- How does Vertex AI handle scaling across CPUs, GPUs, and TPUs?

Objectives

- Understand the difference between local training in a Vertex AI

Workbench notebook and submitting managed training jobs.

- Learn to configure and use Vertex AI custom training jobs for

different frameworks (e.g., XGBoost, PyTorch, SKLearn).

- Understand scaling options in Vertex AI, including when to use CPUs,

GPUs, or TPUs.

- Compare performance, cost, and setup between custom scripts and

pre-built containers in Vertex AI.

- Conduct training with data stored in GCS and monitor training job status using the Google Cloud Console.

Cost awareness: training jobs

Training jobs bill per VM-hour while the job is running. An

n1-standard-4 (CPU) costs ~ $0.19/hr; adding a

T4 GPU brings the total to ~ $0.54/hr. Jobs automatically

stop (and stop billing) when the script finishes. For a complete cost

reference, see the Compute for ML page

and the cost table in Episode 9.

Here’s the architecture we introduced in Episode 2 — your lightweight notebook orchestrates training jobs that run on separate, more powerful VMs, with all artifacts stored in GCS:

Initial setup

1. Open pre-filled notebook

Navigate to

/Intro_GCP_for_ML/notebooks/04-Training-models-in-VertexAI.ipynb

to begin this notebook.

2. CD to instance home directory

To ensure we’re all in the same starting spot, change directory to your Jupyter home directory.

3. Set environment variables

This code initializes the Vertex AI environment by importing the Python SDK, setting the project, region, and defining a GCS bucket for input/output data.

-

PROJECT_ID: Identifies your GCP project.

-

REGION: Determines where training jobs run (choose a region close to your data).

PYTHON

from google.cloud import storage

client = storage.Client()

PROJECT_ID = client.project

REGION = "us-central1"

BUCKET_NAME = "doe-titanic" # ADJUST to your bucket's name

LAST_NAME = "DOE" # ADJUST to your last name or name

print(f"project = {PROJECT_ID}\nregion = {REGION}\nbucket = {BUCKET_NAME}")How does storage.Client() know

your project?

When you call storage.Client() without arguments, the

library automatically discovers your credentials and project ID. This

works because Vertex AI Workbench VMs run on Google Compute Engine,

which provides a metadata server at a known internal

address. The client library queries this server to retrieve the project

ID and a service-account token — no keys or config files needed. If you

ran the same code on your laptop, you would need to authenticate first

with gcloud auth application-default login (see Episode 8 for details).

Testing train_xgboost.py locally in the notebook

Before submitting a managed training job to Vertex AI, let’s first examine and test the training script on our notebook VM. This ensures the code runs without errors before we spend money on cloud compute.

One script, two environments

A key design goal of train_xgboost.py is that the

same script runs unchanged on your laptop, inside a

Workbench notebook, and as a Vertex AI managed training job. Two

patterns make this possible:

GCS-aware I/O helpers (

read_csv_any,save_model_any): These functions check whether a path starts withgs://. If it does, they use thegoogle-cloud-storageclient to read or write. If not, they use plain local file I/O. This means you can pass--train ./titanic_train.csvfor a local test and--train=gs://my-bucket/titanic_train.csvfor a cloud job without changing any code.AIP_MODEL_DIRenvironment variable: When Vertex AI runs a CustomTrainingJob withbase_output_dirset, it injectsAIP_MODEL_DIR(ags://path) into the container. The script reads this variable to decide where to save the model. Locally, the variable is unset, so it falls back to the current directory (.).

This “write once, run anywhere” approach means you can debug locally first (fast, free) and then submit the exact same script to Vertex AI (scalable, managed) with confidence.

Understanding the XGBoost Training Script

Take a moment to review

Intro_GCP_for_ML/scripts/train_xgboost.py. This is a

standard XGBoost training script — it handles preprocessing, training,

and saving a model. What makes it cloud-ready is that it also supports

GCS (gs://) paths and adapts to Vertex AI conventions

(e.g., AIP_MODEL_DIR), so the same script runs locally or

as a managed training job without changes.

Try answering the following questions:

- Data preprocessing: What transformations are applied to the dataset before training?

-

Training function: What does the

train_model()function do? Why print the training time? -

Command-line arguments: What is the purpose of

argparsein this script? How would you change the number of training rounds? - Handling local vs. GCP runs: How does the script let you run the same code locally, in Workbench, or as a Vertex AI job? Which environment variable controls where the model artifact is written?

-

Training and saving the model: What format is the

dataset converted to before training, and why? How does the script save

to a local path vs. a

gs://destination?

After reviewing, discuss any questions or observations with your group.

-

Data preprocessing: The script fills missing values

(

Agewith median,Embarkedwith mode), maps categorical fields to numeric (Sex→ {male:1, female:0},Embarked→ {S:0, C:1, Q:2}), and drops non-predictive columns (PassengerId,Name,Ticket,Cabin). -

Training function:

train_model()constructs and fits an XGBoost model with the provided parameters and prints wall-clock training time. Timing helps compare runs and make sensible scaling choices. -

Command-line arguments:

argparselets you set hyperparameters and file paths without editing code (e.g.,--max_depth,--eta,--num_round,--train). To change rounds:python train_xgboost.py --num_round 200 -

Handling local vs. GCP runs:

-

Input: You pass

--trainas either a local path (train.csv) or a GCS URI (gs://bucket/path.csv). The script automatically detectsgs://and reads the file directly from Cloud Storage using the Python client. -

Output: If the environment variable

AIP_MODEL_DIRis set (as it is in Vertex AI CustomJobs), the trained model is written there—often ags://path. Otherwise, the model is saved in the current working directory, which works seamlessly in both local and Workbench environments.

-

Input: You pass

-

Training and saving the model: The training data is

converted into an XGBoost

DMatrix, an optimized format that speeds up training and reduces memory use. The trained model is serialized withjoblib. When saving locally, the file is written directly to disk. If saving to a Cloud Storage path (gs://...), the model is first saved to a temporary file and then uploaded to the specified bucket.

Before scaling training jobs onto managed resources, it’s essential to test your training script locally. This prevents wasting GPU/TPU time on bugs or misconfigured code. Skipping these checks can lead to silent data bugs, runtime blowups at scale, inefficient experiments, or broken model artifacts.

Sanity checks before scaling

- Reproducibility – Do you get the same result each time? If not, set seeds controlling randomness.

- Data loads correctly – Dataset loads without errors, expected columns exist, missing values handled.

- Overfitting check – Train on a tiny dataset (e.g., 100 rows). If it doesn’t overfit, something is off.

- Loss behavior – Verify training loss decreases and doesn’t diverge.

- Runtime estimate – Get a rough sense of training time on small data before committing to large compute.

- Memory estimate – Check approximate memory use to choose the right machine type.

- Save & reload – Ensure model saves, reloads, and infers without errors.

Download data into notebook environment

Sometimes it’s helpful to keep a copy of data in your notebook VM for quick iteration, even though GCS is the preferred storage location. For example, downloading locally lets you test your training script without any GCS dependencies, making debugging faster. Once you’ve verified everything works, the actual Vertex AI job will read directly from GCS.

Local test run of train_xgboost.py

Running a quick test on the Workbench notebook VM is cheap — it’s a lightweight machine that costs only ~$0.19/hr. The real cost comes later when you launch managed training jobs with larger machines or GPUs. Think of your notebook as a low-cost controller: use it to catch bugs and verify logic before spending on cloud compute.

As you gain confidence, you can skip the notebook VM entirely and run

these tests on your own laptop or lab machine — then submit jobs to

Vertex AI via the gcloud CLI or Python SDK from anywhere

(see Episode 8). That eliminates the

VM cost altogether.

- For large datasets, use a small representative sample of the total dataset when testing locally (i.e., just to verify that code is working and model overfits nearly perfectly after training enough epochs)

- For larger models, use smaller model equivalents (e.g., 100M vs 7B params) when testing locally

PYTHON

# Pin the same XGBoost version used by the Vertex AI prebuilt container

# (xgboost-cpu.2-1) so local and cloud results are identical.

!pip install xgboost==2.1.0PYTHON

# Training configuration parameters for XGBoost

MAX_DEPTH = 3 # maximum depth of each decision tree (controls model complexity)

ETA = 0.1 # learning rate (how much each tree contributes to the overall model)

SUBSAMPLE = 0.8 # fraction of training samples used per boosting round (prevents overfitting)

COLSAMPLE = 0.8 # fraction of features (columns) sampled per tree (adds randomness and diversity)

NUM_ROUND = 100 # number of boosting iterations (trees) to train

import time as t

start = t.time()

# Run the custom training script with hyperparameters defined above

!python Intro_GCP_for_ML/scripts/train_xgboost.py \

--max_depth $MAX_DEPTH \

--eta $ETA \

--subsample $SUBSAMPLE \

--colsample_bytree $COLSAMPLE \

--num_round $NUM_ROUND \

--train titanic_train.csv

print(f"Total local runtime: {t.time() - start:.2f} seconds")Training on this small dataset should take <1 minute. Log runtime as a baseline. You should see the following output file:

-

xgboost-model— Serialized XGBoost model (Booster) via joblib; load withjoblib.load()for reuse.

Evaluate the trained model on validation data

Now that we’ve trained and saved an XGBoost model, we want to do the

most important sanity check:

Does this model make reasonable predictions on unseen

data?

This step: 1. Loads the serialized model artifact that was written by

train_xgboost.py 2. Loads a test set of Titanic passenger

data 3. Applies the same preprocessing as training 4. Generates

predictions 5. Computes simple accuracy

First, we’ll download the test data

PYTHON

blob = bucket.blob("titanic_test.csv")

blob.download_to_filename("titanic_test.csv")

print("Downloaded titanic_test.csv")Then, we apply the same preprocessing function used by our training script before applying the model to our data.

Note: The

importbelow treats the repo as a Python package. This works because we cloned the repo into/home/jupyter/and the directory contains an__init__.py. If you get anImportError, make sure your working directory is/home/jupyter/(run%cd /home/jupyter/first).

Note on test data: The training script internally splits its input data 80/20 for training and validation. The

titanic_test.csvfile we use here is a separate, held-out test set that was never seen during training — not even by the internal validation split. This gives us an unbiased measure of model performance.

PYTHON

import pandas as pd

import xgboost as xgb

import joblib

from sklearn.metrics import accuracy_score

from Intro_GCP_for_ML.scripts.train_xgboost import preprocess_data # reuse same preprocessing

# Load test data

test_df = pd.read_csv("titanic_test.csv")

# Apply same preprocessing from training

X_test, y_test = preprocess_data(test_df)

# Load trained model from local file

model = joblib.load("xgboost-model")

# Predict on test data

dtest = xgb.DMatrix(X_test)

y_pred = model.predict(dtest)

y_pred_binary = (y_pred > 0.5).astype(int)

# Compute accuracy

acc = accuracy_score(y_test, y_pred_binary)

print(f"Test accuracy: {acc:.3f}")You should see test accuracy in the range of 0.78–0.82. If accuracy is significantly lower, double-check that the test data downloaded correctly and that the preprocessing matches the training script.

Experiment with hyperparameters

Try changing NUM_ROUND to 200 and

re-running the local training and evaluation cells above. Does accuracy

improve? How does the runtime change? Then try

MAX_DEPTH = 6. What happens to accuracy — does the model

improve, or does it start overfitting?

Increasing NUM_ROUND from 100 to 200 may marginally

improve accuracy but roughly doubles runtime. Increasing

MAX_DEPTH from 3 to 6 lets trees capture more complex

patterns but can lead to overfitting on a small dataset like Titanic —

you may see training accuracy increase while test accuracy stays flat or

drops. This is why testing hyperparameters locally before scaling is

important.

Training via Vertex AI custom training job

Unlike “local” training using our notebook’s VM, this next approach launches a managed training job that runs on scalable compute. Vertex AI handles provisioning, scaling, logging, and saving outputs to GCS.

Which machine type to start with?

Start with a small CPU machine like n1-standard-4. Only

scale up to GPUs/TPUs once you’ve verified your script. See Compute

for ML for guidance.

Creating a custom training job with the SDK

Reminder: We’re using the Python SDK from a notebook here, but the same

aiplatform.CustomTrainingJobcalls work identically in a standalone.pyscript, a shell session, or a CI pipeline. You can also submit jobs entirely from the command line withgcloud ai custom-jobs create. See the callout in Episode 2 for more details.

We’ll first initialize the Vertex AI platform with our environment

variables. We’ll also set a RUN_ID and

ARTIFACT_DIR to help store outputs.

PYTHON

from google.cloud import aiplatform

import datetime as dt

RUN_ID = dt.datetime.now().strftime("%Y%m%d-%H%M%S")

ARTIFACT_DIR = f"gs://{BUCKET_NAME}/artifacts/xgb/{RUN_ID}/" # everything will live beside this

print(f"project = {PROJECT_ID}\nregion = {REGION}\nbucket = {BUCKET_NAME}\nartifact_dir = {ARTIFACT_DIR}")

# Staging bucket is only for the SDK's temp code tarball (aiplatform-*.tar.gz)

aiplatform.init(project=PROJECT_ID, location=REGION, staging_bucket=f"gs://{BUCKET_NAME}/.vertex_staging")What does

aiplatform.init()do?

aiplatform.init()sets session-wide defaults for the Vertex AI Python SDK. Every SDK call you make afterward (creating jobs, uploading models, querying metadata, etc.) will inherit these values so you don’t have to repeat them each time. The three arguments we pass here are:

Argument Purpose projectThe Google Cloud project that owns (and is billed for) all Vertex AI resources you create. locationThe region where jobs run and artifacts are stored (e.g., us-central1). Must match the region of any buckets or endpoints you reference.staging_bucketA Cloud Storage path where the SDK automatically packages and uploads your training code as a tarball (e.g., aiplatform-2025-01-15-…-.tar.gz). The training VM downloads this tarball at startup to run your script. We point it to a.vertex_stagingsubfolder to keep these temporary archives separate from your real data and model artifacts.You only need to call

aiplatform.init()once per notebook or script session. If you ever need to override a default for a single call (e.g., run a job in a different region), you can pass the argument directly to that method and it will take precedence.

A CustomTrainingJob

is the Vertex AI SDK object that ties together three things:

your training script, a container

image to run it in, and metadata such as a

display name. Think of it as a reusable job definition — it doesn’t

start any compute by itself. Only when you call job.run()

(next step) does Vertex AI actually provision a VM, ship your code to

it, and execute the script.

The code below creates a CustomTrainingJob that points

to train_xgboost.py, uses Google’s prebuilt XGBoost

training container (which already includes common dependencies like

google-cloud-storage), and sets a display_name

for tracking the job in the Vertex AI console.

Tip: If your script needs packages not included in the prebuilt container, you can pass a

requirementslist toCustomTrainingJob(e.g.,requirements=["scikit-learn>=1.3"]).

Prebuilt containers for training

Vertex AI provides prebuilt Docker container images for model training. These containers are organized by machine learning frameworks and framework versions and include common dependencies that you might want to use in your training code. To learn more about prebuilt training containers, see Prebuilt containers for custom training.

PYTHON

job = aiplatform.CustomTrainingJob(

display_name=f"{LAST_NAME}_xgb_{RUN_ID}",

script_path="Intro_GCP_for_ML/scripts/train_xgboost.py",

container_uri="us-docker.pkg.dev/vertex-ai/training/xgboost-cpu.2-1:latest",

)Version alignment: Notice that the container tag

xgboost-cpu.2-1matches thexgboost==2.1.0we installed locally. This is intentional — pinning the same library version in both environments ensures that local and cloud training produce identical results given the same data and random seed.

Finally, this next block launches the custom training job on Vertex

AI using the configuration defined earlier. We won’t be charged

for our selected MACHINE until we run the below code using

job.run(). For an n1-standard-4

running 2–5 minutes, expect a cost of roughly

$0.01–$0.02 — negligible, but

good to be aware of as you scale to larger machines. This marks the

point when our script actually begins executing remotely on the Vertex

training infrastructure. Once job.run() is called, Vertex

handles packaging your training script, transferring it to the managed

training environment, provisioning the requested compute instance, and

monitoring the run. The job’s status and logs can be viewed directly in

the Vertex AI Console under Training → Custom jobs.

If you need to cancel or modify a job mid-run, you can do so from the console or via the SDK by calling job.cancel(). When the job completes, Vertex automatically tears down the compute resources so you only pay for the active training time.

- The

argslist passes command-line parameters directly into your training script, including hyperparameters and the path to the training data in GCS. -

replica_count=1means we run a single training worker. Increase this for distributed training across multiple machines (e.g., data-parallel training with large datasets). -

base_output_dirspecifies where all outputs (model, metrics, logs) will be written in Cloud Storage. -

machine_typecontrols the compute resources used for training. - When

sync=True, the notebook waits until the job finishes before continuing, making it easier to inspect results immediately after training.

PYTHON

job.run(

args=[

f"--train=gs://{BUCKET_NAME}/titanic_train.csv",

f"--max_depth={MAX_DEPTH}",

f"--eta={ETA}",

f"--subsample={SUBSAMPLE}",

f"--colsample_bytree={COLSAMPLE}",

f"--num_round={NUM_ROUND}",

],

replica_count=1,

machine_type=MACHINE, # MACHINE variable defined above; adjust to something more powerful when needed

base_output_dir=ARTIFACT_DIR, # sets AIP_MODEL_DIR for your script

sync=True,

)

print("Model + logs folder:", ARTIFACT_DIR)This launches a managed training job with Vertex AI. It should take 2-5 minutes for the training job to complete.

Understanding the training output message

After your job finishes, you may see a message like:

Training did not produce a Managed Model returning None.

This is expected when running a CustomTrainingJob without

specifying deployment parameters. Vertex AI supports two modes:

-

CustomTrainingJob (research/development) – You

control training and save models/logs to Cloud Storage via

AIP_MODEL_DIR. This is ideal for experimentation and cost control. -

CustomTrainingJob with model registration (for

deployment) – You include

model_serving_container_image_uriandmodel_display_name, and Vertex automatically registers a Managed Model in the Model Registry for deployment to an endpoint.

In our setup, we’re intentionally using the simpler

CustomTrainingJob path without model registration. Your

trained model is safely stored under your specified artifact directory

(e.g., gs://{BUCKET_NAME}/artifacts/xgb/{RUN_ID}/), and you

can later register or deploy it manually when ready.

Monitoring training jobs in the Console

Why do I see both a Training Pipeline and a Custom Job? Under the hood,

CustomTrainingJob.run()creates a TrainingPipeline resource, which in turn launches a CustomJob to do the actual compute work. This is normal — the pipeline is a thin wrapper that manages job lifecycle and (optionally) model registration. You can monitor progress from either view, but Custom Jobs shows the most useful details (logs, machine type, status).

- Go to the Google Cloud Console.

- Navigate to Vertex AI > Training > Custom Jobs.

- Click on your job name to see status, logs, and output model artifacts.

- Cancel jobs from the console if needed (be careful not to stop jobs you don’t own in shared projects).

Visit the console to verify it’s running.

Navigate to Vertex AI > Training > Custom Jobs in the Google Cloud Console to view your running or completed jobs.

If your job fails

Job failures are common when first getting started. Here’s how to debug:

- Check the logs first. In the Console, click your job name → Logs tab. The error message is usually near the bottom.

-

Common failure modes:

- Quota exceeded — Your project may not have enough quota for the requested machine type. Check IAM & Admin > Quotas.

- Script error — A bug in your training script. The traceback will appear in the logs. Fix the bug and re-run locally before resubmitting.

-

Wrong container — Mismatched framework version or

CPU/GPU container. Verify your

container_uri. - Permission denied on GCS — The training service account can’t access your bucket. Check bucket permissions.

- Re-test locally with the same arguments before resubmitting to avoid burning compute time on the same error.

Training artifacts

After the training run completes, we can manually view our bucket using the Google Cloud Console or run the below code.

PYTHON

total_size_bytes = 0

for blob in client.list_blobs(BUCKET_NAME):

total_size_bytes += blob.size

print(blob.name)

total_size_mb = total_size_bytes / (1024**2)

print(f"Total size of bucket '{BUCKET_NAME}': {total_size_mb:.2f} MB")Training Artifacts → ARTIFACT_DIR

This is your intended output location, set via

base_output_dir.

It contains everything your training script explicitly writes. In our

case, this includes:

-

{BUCKET_NAME}/artifacts/xgb/{RUN_ID}/model/xgboost-model— Serialized XGBoost model (Booster) saved viajoblib; reload later withjoblib.load()for reuse or deployment.

System-Generated Staging Files

You’ll also notice files under .vertex_staging/ — one

timestamped tarball per job submission:

.vertex_staging/aiplatform-2026-03-04-05:51:20.248-aiplatform_custom_trainer_script-0.1.tar.gz

.vertex_staging/aiplatform-2026-03-04-05:53:28.009-aiplatform_custom_trainer_script-0.1.tar.gz

...Each time you call job.run(...), the SDK packages your

training script into a .tar.gz, uploads it here, and the

training VM downloads it at startup. These accumulate quickly — the

example above shows 19 archives from a single day of iteration. They are

safe to delete once the job finishes, and you can automate cleanup with

Object

Lifecycle Management rules (e.g., auto-delete objects in

.vertex_staging/ after 7 days).

To delete all staging files now, run:

This won’t affect your model artifacts under

artifacts/.

Evaluate the trained model stored on GCS

Now let’s compare the model produced by our Vertex AI job to the one we trained locally. This time, instead of loading from the local disk, we’ll load both the test data and model artifact directly from GCS into memory — the recommended approach for production workflows.

PYTHON

import io

# Load test data directly from GCS into memory

bucket = client.bucket(BUCKET_NAME)

blob = bucket.blob("titanic_test.csv")

test_df = pd.read_csv(io.BytesIO(blob.download_as_bytes()))

# Apply same preprocessing logic used during training

X_test, y_test = preprocess_data(test_df)

# Load the model artifact from GCS

MODEL_BLOB_PATH = f"artifacts/xgb/{RUN_ID}/model/xgboost-model"

model_blob = bucket.blob(MODEL_BLOB_PATH)

model_bytes = model_blob.download_as_bytes()

model = joblib.load(io.BytesIO(model_bytes))

# Run predictions and compute accuracy

dtest = xgb.DMatrix(X_test)

y_pred_prob = model.predict(dtest)

y_pred = (y_pred_prob >= 0.5).astype(int)

acc = accuracy_score(y_test, y_pred)

print(f"Test accuracy (model from Vertex job): {acc:.3f}")Compare local vs. Vertex AI accuracy

Compare the test accuracy from your local training run with the accuracy from the Vertex AI job. Are they the same? Why or why not?

The two accuracy values should be very close (within

~1–2 percentage points) but may not be byte-for-byte identical, even

though both runs use the same script, hyperparameters, data, and random

seed (seed=42).

Why? The subsample=0.8 and

colsample_bytree=0.8 settings randomly sample rows and

columns each boosting round. A seed guarantees determinism only within

the exact same library version, NumPy build, and

BLAS/LAPACK backend. The Workbench notebook VM and the prebuilt training

container ship different underlying numerical libraries (e.g., OpenBLAS

vs. MKL), so even with identical XGBoost versions the random sampling

sequence can diverge slightly — producing a different model and

therefore a small accuracy difference.

If you want exact reproducibility, set subsample=1.0 and

colsample_bytree=1.0 (no random sampling) or accept that

minor variation across environments is normal and expected in

practice.

Explore job logs in the Console

Navigate to Vertex AI > Training > Custom Jobs in the Google Cloud Console. Find your most recent job and click on it. Can you locate:

- The Logs tab showing your script’s

print()output? - The training time printed by

train_model()? - The output artifact path?

- Click your job name, then select the Logs tab (or

View logs link). Your script’s

print()statements — including train/val sizes, training time, and model save path — appear in the log stream. - Look for the line

Training time: X.XX secondsin the logs. This comes from thetrain_model()function intrain_xgboost.py. - The artifact path is shown in the log line

Model saved to gs://...and also appears in the job details panel under output configuration.

Looking ahead: when training takes too long

The Titanic dataset is tiny, so our job finishes in minutes. In your real work, you’ll encounter datasets and models where a single training run takes hours or days. When that happens, Vertex AI gives you two main levers:

Option 1: Upgrade to more powerful machine types - Use a larger machine or add GPUs (e.g., T4, V100, A100). This is the simplest approach and works well for datasets under ~10 GB.

Option 2: Use distributed training with multiple replicas - Split the dataset across replicas with synchronized gradient updates. This becomes worthwhile when datasets exceed 10–50 GB or single-machine training takes more than 10 hours.

We’ll explore both options hands-on in the next episode when we train a PyTorch neural network with GPU acceleration.

-

Environment initialization: Use

aiplatform.init()to set defaults for project, region, and bucket.

-

Local vs managed training: Test locally before

scaling into managed jobs.

-

Custom jobs: Vertex AI lets you run scripts as

managed training jobs using pre-built or custom containers.

-

Scaling: Start small, then scale up to GPUs or

distributed jobs as dataset/model size grows.

- Monitoring: Track job logs and artifacts in the Vertex AI Console.

Content from Training Models in Vertex AI: PyTorch Example

Last updated on 2026-03-05 | Edit this page

Overview

Questions

- When should you consider a GPU (or TPU) instance for PyTorch training in Vertex AI, and what are the trade‑offs for small vs. large workloads?

- How do you launch a script‑based training job and write all artifacts (model, metrics, logs) next to each other in GCS without deploying a managed model?

Objectives

- Prepare the Titanic dataset and save train/val arrays to compressed

.npzfiles in GCS. - Submit a CustomTrainingJob that runs a PyTorch script and

explicitly writes outputs to a chosen

gs://…/artifacts/.../folder. - Co‑locate artifacts:

model.pt(or.joblib),metrics.json,eval_history.csv, andtraining.logfor reproducibility. - Choose CPU vs. GPU instances sensibly; understand when distributed training is (not) worth it.

Initial setup

1. Open pre-filled notebook

Navigate to

/Intro_GCP_for_ML/notebooks/05-Training-models-in-VertexAI-GPUs.ipynb

to begin this notebook. Select the PyTorch environment

(kernel). Local PyTorch is only needed for local tests. Your

Vertex AI job uses the container specified by

container_uri (e.g., pytorch-xla.2-4.py310 for

CPU or pytorch-gpu.2-4.py310 for GPU), so it brings its own

framework at run time.

2. CD to instance home directory

To ensure we’re all in the same starting spot, change directory to your Jupyter home directory.

3. Set environment variables

This code initializes the Vertex AI environment by importing the Python SDK, setting the project, region, and defining a GCS bucket for input/output data.

PYTHON

from google.cloud import aiplatform, storage

client = storage.Client()

PROJECT_ID = client.project

REGION = "us-central1"

BUCKET_NAME = "doe-titanic" # ADJUST to your bucket's name

LAST_NAME = 'DOE' # ADJUST to your last name. Since we're in a shared account environment, this will help us track down jobs in the Console

print(f"project = {PROJECT_ID}\nregion = {REGION}\nbucket = {BUCKET_NAME}")

# initializes the Vertex AI environment with the correct project and location. Staging bucket is used for storing the compressed software that's packaged for training/tuning jobs.

aiplatform.init(project=PROJECT_ID, location=REGION, staging_bucket=f"gs://{BUCKET_NAME}/.vertex_staging") # store tar balls in staging folder Prepare data as .npz

Unlike the XGBoost script from Episode 4 (which handles preprocessing

internally from raw CSV), our PyTorch script expects pre-processed NumPy

arrays. We’ll prepare those here and save them as .npz

files.

Why .npz? NumPy’s .npz files are compressed

binary containers that can store multiple arrays (e.g., features and

labels) together in a single file:

-

Compact & fast: smaller than CSV, and one file

can hold multiple arrays (

X_train,y_train). -

Cloud-friendly: each

.npzis a single GCS object — one network call to read instead of streaming many small files, reducing latency and egress costs. -

Vertex AI integration: when you launch a training

job, GCS objects are automatically staged to the job VM’s local scratch

disk, so

np.load(...)reads from local storage at runtime. -

Reproducible: unlike CSV,

.npzpreserves exact dtypes and shapes across environments.

PYTHON

import pandas as pd

import io

import numpy as np

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler, LabelEncoder

# Load Titanic CSV (from local or GCS you've already downloaded to the notebook)

bucket = client.bucket(BUCKET_NAME)

blob = bucket.blob("titanic_train.csv")

df = pd.read_csv(io.BytesIO(blob.download_as_bytes()))

# Minimal preprocessing to numeric arrays

sex_enc = LabelEncoder().fit(df["Sex"])

df["Sex"] = sex_enc.transform(df["Sex"])

df["Embarked"] = df["Embarked"].fillna("S")

emb_enc = LabelEncoder().fit(df["Embarked"])

df["Embarked"] = emb_enc.transform(df["Embarked"])

df["Age"] = df["Age"].fillna(df["Age"].median())

df["Fare"] = df["Fare"].fillna(df["Fare"].median())

X = df[["Pclass","Sex","Age","SibSp","Parch","Fare","Embarked"]].values

y = df["Survived"].values

scaler = StandardScaler()

X = scaler.fit_transform(X)

X_train, X_val, y_train, y_val = train_test_split(

X, y, test_size=0.2, random_state=42)

np.savez("/home/jupyter/train_data.npz", X_train=X_train, y_train=y_train)

np.savez("/home/jupyter/val_data.npz", X_val=X_val, y_val=y_val)We can then upload the files to our GCS bucket.

PYTHON

# Upload to GCS

bucket.blob("data/train_data.npz").upload_from_filename("/home/jupyter/train_data.npz")

bucket.blob("data/val_data.npz").upload_from_filename("/home/jupyter/val_data.npz")

print("Uploaded: gs://%s/data/train_data.npz and val_data.npz" % BUCKET_NAME)Verify the upload by listing your bucket contents (same pattern as Episode 3):

Minimal PyTorch training script (train_nn.py) - local

test

Running a quick test on the Workbench notebook VM is cheap — it’s a lightweight machine that costs only ~$0.19/hr. The real cost comes later when you launch managed training jobs with larger machines or GPUs. Think of your notebook as a low-cost controller: use it to catch bugs and verify logic before spending on cloud compute.

As you gain confidence, you can skip the notebook VM entirely and run

these tests on your own laptop or lab machine — then submit jobs to

Vertex AI via the gcloud CLI or Python SDK from anywhere

(see Episode 8). That eliminates the

VM cost altogether.

- For large datasets, use a small representative sample of the total dataset when testing locally (i.e., just to verify that code is working and model overfits nearly perfectly after training enough epochs)

- For larger models, use smaller model equivalents (e.g., 100M vs 7B params) when testing locally

Find this file in our repo:

Intro_GCP_for_ML/scripts/train_nn.py. It does three things:

1) loads .npz from local or GCS paths (transparently

handles both) 2) trains a small neural network (a 3-layer MLP) with

early stopping 3) writes all outputs side‑by‑side (model + metrics +

eval history + training.log) to the folder specified by the

AIP_MODEL_DIR environment variable (set automatically by

Vertex AI via base_output_dir), falling back to the current

directory for local runs.

What’s inside train_nn.py? (Quick

reference)

You don’t need to understand every line of the PyTorch code for this workshop — the focus is on how to package and run any training script on Vertex AI. That said, here’s a quick orientation:

-

GCS helpers (top of file):

read_npz_any()andsave_*_any()functions detectgs://paths and use the GCS Python client automatically. This is the key pattern that makes the same script work locally and in the cloud. -

AIP_MODEL_DIR: Vertex AI sets this environment variable to tell your script where to write artifacts. The script reads it at the top ofmain(). -

Model: A small feedforward network

(

TitanicNet) — the architecture details aren’t important for this lesson. -

Early stopping: Training halts when validation loss

stops improving (controlled by

--patience). This saves compute time and cost on cloud jobs.

To test this code, we can run the following:

PYTHON

# configure training hyperparameters to use in all model training runs downstream

MAX_EPOCHS = 500

LR = 0.001

PATIENCE = 50

# local training run

import time as t

start = t.time()

# Example: run your custom training script with args

!python /home/jupyter/Intro_GCP_for_ML/scripts/train_nn.py \

--train /home/jupyter/train_data.npz \

--val /home/jupyter/val_data.npz \

--epochs $MAX_EPOCHS \

--learning_rate $LR \

--patience $PATIENCE

print(f"Total local runtime: {t.time() - start:.2f} seconds")NumPy version mismatch?

Reproducibility test